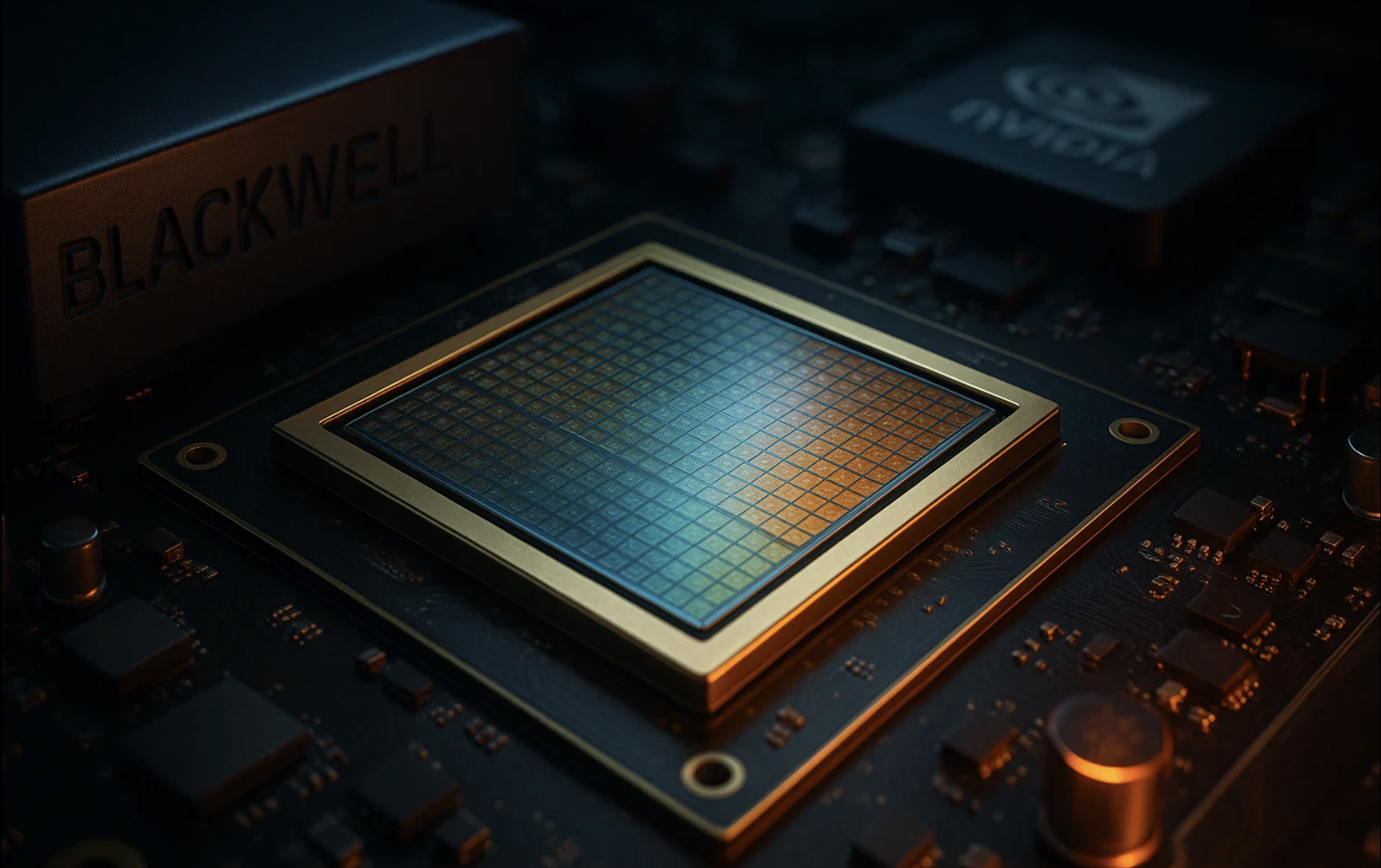

Inside Nvidia Blackwell: The Chip That Could Dominate the AI Race

In the fast-moving world of artificial intelligence (AI), hardware performance often determines which companies lead and which struggle to keep pace. Nvidia’s Blackwell architecture is the company’s boldest attempt yet to cement its dominance in the AI chip market, a market that is projected to keep growing rapidly as enterprises, governments, and cloud providers scale their adoption of machine learning and generative AI.

For investors, technologists, and enterprises, Blackwell is not just another product cycle—it is a statement about Nvidia’s ability to shape the future of computing itself.

What Is the Nvidia Blackwell Processor?

The Blackwell GPU architecture is Nvidia’s successor to the highly successful Hopper line, which powered many of the world’s most advanced AI models. Blackwell is designed to deliver massive leaps in throughput, efficiency, and scalability, specifically tailored to the demands of generative AI, large language models, and advanced scientific computing.

Key highlights include:

- Higher AI Throughput: Designed to train and serve models with up to trillions of parameters faster than previous generations.
- Energy Efficiency Gains: Lower energy consumed per computation, a critical factor for large data centers.
- Scalability: Designed for integration into supercomputers, hyperscale data centers, and enterprise AI clusters.
- Advanced Memory and Interconnect: High-bandwidth HBM3e memory and fifth-generation NVLink move data faster within and between GPUs, reducing bottlenecks in AI workloads.

For enterprises building generative AI platforms, Blackwell represents a way to handle ever-larger models without prohibitive cost or energy requirements.
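To make the scale concrete, here is a rough back-of-envelope sketch in Python. It is a simplification under stated assumptions: it counts only the memory needed to hold model weights at a given numeric precision, and the per-GPU memory capacity and the power and throughput figures in the usage lines are hypothetical round numbers, not Blackwell specifications. The helper names are illustrative.

```python
import math

# Bytes needed to store one parameter at common numeric precisions.
BYTES_PER_PARAM = {"fp32": 4, "bf16": 2, "fp8": 1}


def weight_memory_gb(num_params: float, precision: str) -> float:
    """Memory for model weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    return num_params * BYTES_PER_PARAM[precision] / 1e9


def min_gpus_for_weights(num_params: float, precision: str, gpu_memory_gb: float) -> int:
    """Lower bound on GPU count just to hold the weights.

    Ignores activations, optimizer state, and inference caches, which in
    practice add substantially more memory.
    """
    return math.ceil(weight_memory_gb(num_params, precision) / gpu_memory_gb)


def energy_per_op_joules(power_watts: float, ops_per_second: float) -> float:
    """Energy per operation: watts are joules per second, so divide by ops/s."""
    return power_watts / ops_per_second


if __name__ == "__main__":
    params = 1e12  # a one-trillion-parameter model
    hypothetical_gpu_memory_gb = 100  # assumed round figure, not a real spec

    print(weight_memory_gb(params, "bf16"))  # 2000.0
    print(min_gpus_for_weights(params, "bf16", hypothetical_gpu_memory_gb))  # 20
    print(min_gpus_for_weights(params, "fp8", hypothetical_gpu_memory_gb))  # 10

    # Hypothetical accelerator drawing 1,000 W while sustaining 1e15 ops/s.
    print(energy_per_op_joules(1000, 1e15))  # 1 picojoule per operation
```

Under these assumptions, a trillion-parameter model in BF16 needs about 2,000 GB just for its weights, so it cannot fit on any single GPU and must be spread across many. Halving the bytes per parameter halves that floor, and raising throughput at the same power draw lowers energy per operation, which is why precision, memory, and efficiency gains all matter at this scale.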

Why Blackwell Could Dominate the AI Market

Nvidia already holds the lion’s share of the GPU market for AI, and Blackwell is designed to solidify and extend that lead. Several factors support that ambition:

1. First-Mover Advantage in Generative AI

Generative AI requires enormous computational resources. By releasing Blackwell at a time when demand for AI infrastructure is skyrocketing, Nvidia positions itself as the default provider for training and inference at scale.

2. Integration With CUDA and Software Ecosystem

Hardware alone does not win markets. Nvidia’s CUDA software stack, along with its libraries for AI, simulation, and scientific computing, lets enterprises already invested in the ecosystem move to Blackwell with relatively little change to their existing software.

3. Partnerships With Cloud Providers

Major cloud platforms such as Amazon Web Services, Microsoft Azure, and Google Cloud have announced plans to offer Blackwell GPUs in their AI services. This should broaden global availability and speed adoption.

4. Competitive Gap With Rivals

While AMD and Intel are also investing heavily in AI accelerators, Nvidia’s pace of innovation and software integration gives it a substantial edge. Blackwell aims to widen that gap further, especially for workloads that demand both performance and reliability.

Blackwell’s Role in the AI Race

The global AI race is not just about technology—it is about economic influence, national competitiveness, and long-term dominance in digital infrastructure.

- For Enterprises: Blackwell enables faster product development cycles, from AI-driven customer experiences to advanced data analytics.
- For Governments: Nations see access to cutting-edge AI chips as strategic assets, fueling innovation in defense, healthcare, and research.
- For Investors: Nvidia’s continued leadership makes it a focal point in technology portfolios, driving discussions around valuation and long-term growth potential.

With Blackwell, Nvidia is signaling that it intends to remain not only a hardware vendor but also a critical enabler of global AI progress.

Challenges and Risks

Despite its advantages, Blackwell’s path is not without challenges:

- High Costs: Advanced GPUs remain expensive, potentially limiting access for smaller enterprises.
- Supply Chain Dependencies: Semiconductor manufacturing relies heavily on global supply chains, and Nvidia depends on foundries such as TSMC to produce its chips.
- Geopolitical Risks: Export controls and trade restrictions could limit availability in certain regions.
- Rising Competition: While Nvidia leads today, competitors are innovating aggressively to capture a share of the AI chip market.

Investors and enterprises must balance enthusiasm with awareness of these structural risks.

Industry Impact and Future Outlook

The release of Blackwell is expected to accelerate adoption of AI in industries ranging from healthcare and finance to manufacturing and telecommunications. Faster model training and inference mean that applications once considered too resource-intensive can now become practical at scale.

For example:

- Healthcare: AI models for drug discovery and diagnostics can process larger datasets more efficiently.
- Finance: Algorithmic trading and fraud detection benefit from lower latency and support for more complex models.
- Retail and Marketing: Personalized customer experiences can be delivered in real time with AI-powered insights.
- Telecommunications: Network optimization and predictive maintenance improve with faster machine learning workloads.

These ripple effects make Blackwell not just a product launch, but a catalyst for innovation across multiple sectors.

Final Thoughts

Nvidia’s Blackwell processor is more than just a chip—it is a strategic weapon in the global AI race. By combining cutting-edge hardware with an unmatched software ecosystem, Nvidia has positioned itself at the center of enterprise AI adoption.

For investors, Blackwell reinforces Nvidia’s role as one of the most important technology companies of this decade. For enterprises, it offers a path to accelerate innovation while managing costs and scalability. And for the broader economy, it signals the continued fusion of AI, semiconductors, and digital transformation.

The AI race is far from over, but with Blackwell, Nvidia has set a new benchmark that competitors will struggle to match.